Why AI Makes Things Up and How You Can Control It

‘In February 2024, a tribunal in Canada held Air Canada liable for a discount offered by its AI chatbot, even though that discount did not exist under company policy.’

‘Forbes reported that a university study found LLM-powered coding tools sometimes hallucinated references to packages that did not exist. About 20% of the code samples examined, across 30 different LLMs tested on Python and JavaScript, referenced non-existent packages. Such references introduce software supply-chain vulnerabilities that hackers could exploit.’

These are not just chatbot or AI malfunctions; they are warning signs. What happens when AI delivers false information with complete confidence and people trust it?

So, What is an AI Hallucination?

AI hallucination refers to a phenomenon in which AI systems, particularly generative AI tools, produce outputs that are misleading, incorrect, or fabricated. People often trust these outputs as the truth and use them to make decisions. Sometimes this is harmless; other times it has serious repercussions.

Consider an AI-powered medical system recommending an incorrect prescription, or a business chatbot misleading customers. These scenarios show why AI hallucinations are not just a technical flaw but a real-world problem that AI professionals, cybersecurity experts, AI ethics thought leaders, and domain experts must address.

How AI’s Wild Imagination Is Hurting Us

AI-generated misinformation can affect most aspects of our lives, society, and the economy:

- Misinformation Spread: AI can generate false news or misleading statements that confuse readers, viewers, or listeners. As generative AI is increasingly used to create content, this problem grows.

For example, in October 2024, Dow Jones and the New York Post sued a popular AI company, arguing that hallucinating fake news and attributing it to a real newspaper was illegal and could confuse readers.

- Legal and Ethical Risks: AI providing inaccurate legal or medical advice can lead to real harm.

In the case of Gauthier v. Goodyear Tire & Rubber Co., a Texas attorney, Brandon Monk, faced significant legal repercussions for relying on generative AI to draft a legal brief that included citations to non-existent cases and fabricated quotations.

- Business Reputational Damage: Companies using AI-powered chatbots risk misinforming customers, eroding trust, and facing potential lawsuits.

For example, the Air Canada case mentioned in the introduction shows how a discount offered by a chatbot can cause both financial and reputational damage.

- Security Risk: AI used in cybersecurity may raise false alarms about non-existent threats. And, as mentioned in the introduction, AI hallucinations can produce code that references non-existent packages, creating software vulnerabilities.

- Bias and Discrimination: AI hallucinations can reinforce stereotypes and lead to flawed decisions that affect a particular class or group.

For example, it was reported in 2019 that a widely used healthcare prediction algorithm showed significant racial bias against Black Americans.

Why Does AI Make Up Things?

AI does not know everything in advance; it learns from data and then makes predictions. AI hallucinations therefore stem from problems with the training data and methodology, or from a lack of fact-checking during generation. There are several reasons why an AI may produce misleading information.

1. Incomplete or Biased Training Data

AI learns from the data it is trained on. If that data is inaccurate, incomplete, or biased, AI may generate misleading or completely false responses.

Example: A medical AI trained only on Western healthcare data might misdiagnose a disease prevalent in India due to a lack of relevant training.

2. Overfitting

Overfitting happens when an AI model learns its training data too closely, memorizing specific details rather than understanding general patterns. Sometimes the model learns not just the details but the noise as well. Because of this over-learning, the model fails to generalize its knowledge and applies irrelevant patterns when making decisions or predictions.

Example: A stock prediction AI trained on 10 years of data may learn to recognize patterns, but instead of spotting real trends, it may memorize random fluctuations. When applied to real trading, it fails because the patterns it relied on were coincidences, not reliable predictors.

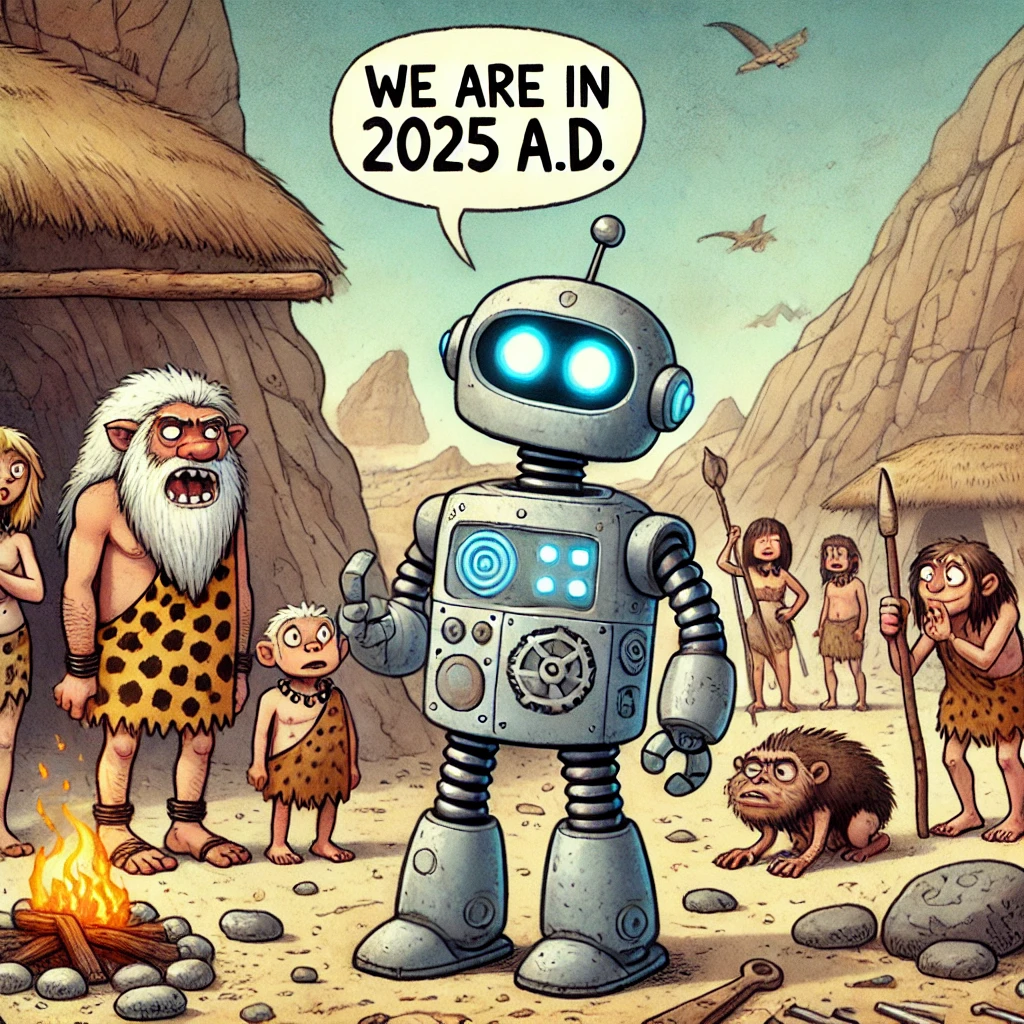
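The toy Python sketch below makes the idea concrete. It is an illustration under stated assumptions, not a real stock model: the data points follow the trend y = 2x plus a made-up alternating noise pattern, a nearest-neighbour lookup (`fit_memorizer`, a hypothetical helper) stands in for a model that has memorized its training data, and a least-squares line (`fit_linear`) stands in for a model that learned the general pattern.

```python
# Toy illustration of overfitting. The data, noise pattern and both "models"
# are hypothetical, chosen only to show how fitting training noise perfectly
# can hurt predictions on new inputs.

def make_data():
    xs = list(range(10))
    noise = [1.0 if x % 2 == 0 else -1.0 for x in xs]  # deterministic stand-in for noise
    ys = [2.0 * x + n for x, n in zip(xs, noise)]       # the real trend is y = 2x
    return xs, ys


def fit_memorizer(xs, ys):
    table = dict(zip(xs, ys))

    def predict(x):
        # Return the stored label of the closest training input (1-nearest neighbour),
        # so every training point is reproduced exactly, noise included.
        nearest = min(table, key=lambda t: abs(t - x))
        return table[nearest]

    return predict


def fit_linear(xs, ys):
    # Ordinary least-squares line: captures the trend and averages out the noise.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    return lambda x: slope * x + intercept


def mse(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


xs, ys = make_data()
test_xs = [i + 0.3 for i in range(9)]  # inputs the models never saw
test_ys = [2.0 * x for x in test_xs]   # scored against the true trend

memorizer, linear = fit_memorizer(xs, ys), fit_linear(xs, ys)
for name, model in [("memorizer", memorizer), ("linear", linear)]:
    print(f"{name}: train MSE={mse(model, xs, ys):.2f}, test MSE={mse(model, test_xs, test_ys):.2f}")
```

The memorizer scores zero error on the training points because it simply replays the noisy labels, but on unseen inputs it does worse than the straight line, which averaged the noise away. That gap between training and test performance is the usual symptom of overfitting.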

3. Outdated Knowledge

Many AI models have a knowledge cut-off date, meaning they are unaware of events that occurred after their last update.

Example: An AI whose training data ends in 2021 may still claim in 2025 that Joe Biden is the current U.S. president.
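One possible mitigation is to detect questions that probably need information newer than the model's training data and then either fetch live data or attach a caveat. The sketch below assumes a known cutoff year (2021, matching the example above) and uses a crude heuristic: any year after the cutoff, or words such as "current" or "latest". Both `needs_fresh_data` and `answer_with_caveat` are hypothetical helpers, not part of any library.

```python
import re

# Hypothetical helpers: flag questions that likely need information newer than
# the model's training cutoff. The keyword list is an illustrative assumption.
TIME_SENSITIVE_WORDS = {"current", "currently", "latest", "today", "now", "recent"}


def needs_fresh_data(question: str, cutoff_year: int) -> bool:
    # An explicit year later than the cutoff is a strong signal.
    years = [int(y) for y in re.findall(r"\b(?:19|20)\d{2}\b", question)]
    if any(year > cutoff_year for year in years):
        return True
    # Words like "current" or "latest" suggest the answer may have changed since training.
    words = set(re.findall(r"[a-z]+", question.lower()))
    return bool(words & TIME_SENSITIVE_WORDS)


def answer_with_caveat(question: str, model_answer: str, cutoff_year: int) -> str:
    # Attach a warning instead of presenting possibly stale knowledge as fact.
    if needs_fresh_data(question, cutoff_year):
        return (f"{model_answer}\n(Note: my training data ends in {cutoff_year}; "
                "please verify this against an up-to-date source.)")
    return model_answer
```

For instance, `needs_fresh_data("Who is the U.S. president in 2025?", 2021)` returns `True`. A keyword heuristic will miss many time-sensitive questions, so it is best treated as a first line of defence rather than a complete fix.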

4. No Real-Time Fact-Checking

Most AI models generate responses based on pre-existing training data rather than verifying facts in real time.

Example: A legal AI assistant may provide outdated tax laws if it does not pull the latest information from government websites.

5. Concept Merging

AI sometimes incorrectly merges unrelated concepts due to their frequent association in its training data.

Example: If asked, “What is the connection between Shakespeare and skateboarding?” AI may generate an entirely fictional link based on unrelated correlations.

6. Adversarial Attacks

Adversaries can use various tactics, such as prompt injection and data poisoning, to confuse AI systems.

Example: Imagine an AI model trained to generate news articles. A malicious actor manipulates its training data by injecting thousands of fake articles containing false political narratives. Over time, the AI “learns” these fabricated stories as real events.

So, How Do We Keep AI Hallucinations in Check?

While AI hallucinations cannot be eliminated entirely, several approaches can help mitigate the risk.

1. Improve Training Data

Training AI models on high-quality, diverse, and reliable datasets reduces the likelihood of hallucinations.

Example: A medical system deployed globally and trained on diverse data spanning different ethnicities, genders, races, and geographies may hallucinate less than one trained on data from only a particular race, ethnicity, gender, or geography.
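A simple first step toward diverse training data is to measure it. The sketch below assumes each training record is a dictionary with a demographic or geographic field, reports each group's share of the dataset, and flags groups below a minimum share. The `underrepresented_groups` helper, the 10% threshold, and the toy records are illustrative assumptions.

```python
from collections import Counter

# Hypothetical data-audit helper: compute each group's share of the training set
# for one attribute and flag groups whose share is below `min_share`.
def underrepresented_groups(records, attribute, min_share=0.10):
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    shares = {group: n / total for group, n in counts.items()}
    flagged = sorted(g for g, s in shares.items() if s < min_share)
    return shares, flagged


# Toy records, not real data.
records = (
    [{"region": "North America"}] * 60
    + [{"region": "Europe"}] * 35
    + [{"region": "South Asia"}] * 5
)
shares, flagged = underrepresented_groups(records, "region")
print(flagged)  # ['South Asia']
```

A balanced count does not guarantee accurate labels, so a check like this complements, rather than replaces, reviewing the quality of the data itself.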

2. Use Retrieval-Augmented Generation (RAG)

RAG systems combine real-time information retrieval with AI-generated content, which improves accuracy. Instead of relying only on what a pre-trained model has learned, a RAG system retrieves relevant information from an organization’s repository and uses it to generate content with generative AI, producing more reliable results.

Example: A RAG-powered chatbot can search the company’s policy documents on returns and refunds and use that information to generate accurate responses to customer queries about refunds.
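A minimal sketch of the retrieval step for that refunds chatbot follows, assuming the policy documents fit in a small in-memory dictionary (the snippets are hypothetical). Real RAG systems typically rank documents with vector embeddings; simple word overlap stands in for that here. The retrieved text goes into the prompt together with an instruction to answer only from it, which is what grounds the model's reply.

```python
import re

# Minimal RAG sketch: word-overlap retrieval stands in for embedding search.
# The policy snippets below are hypothetical examples.
POLICY_DOCS = {
    "refunds": "Refunds are issued to the original payment method within 14 days of approval.",
    "returns": "Items can be returned within 30 days if they are unused and in original packaging.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    # Rank documents by how many words they share with the query; drop zero-overlap hits.
    q = tokenize(query)
    scored = sorted(docs.items(), key=lambda item: len(q & tokenize(item[1])), reverse=True)
    return [(doc_id, text) for doc_id, text in scored[:k] if q & tokenize(text)]


def build_prompt(query: str, docs: dict[str, str]) -> str:
    # Put the retrieved passages in the prompt and tell the model to stay within them.
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, docs))
    return (
        "Answer using ONLY the context below. If the answer is not in the context, "
        "say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


print(build_prompt("How many days do refunds take?", POLICY_DOCS))
```

The resulting prompt would then be sent to whichever LLM the organization uses; that call is left out because it depends on the provider.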

3. Human Oversight (HITL – Human in the Loop)

AI should not operate without human supervision in high-risk areas such as medicine, law, or finance.

Example: A recruitment AI screening resumes should have final human review to ensure fair hiring practices.
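In software, human oversight often takes the form of a gate that decides which AI outputs may be released automatically and which must wait for a person. The sketch below is one hypothetical way to write that gate: outputs in high-risk domains (the article's medicine, law, and finance, plus hiring for the recruitment example) always go to review, and elsewhere only low-confidence outputs are escalated. The domain names, the `AIOutput` type, the 0.8 threshold, and the assumption that the system exposes a numeric confidence score are all illustrative.

```python
from dataclasses import dataclass

# Hypothetical human-in-the-loop gate. Domains, threshold and the confidence
# field are illustrative assumptions, not a standard API.
HIGH_RISK_DOMAINS = {"medicine", "law", "finance", "hiring"}


@dataclass
class AIOutput:
    domain: str
    text: str
    confidence: float  # assumed to be between 0.0 and 1.0


def route(output: AIOutput, threshold: float = 0.8) -> str:
    # High-risk domains always get a human reviewer before anything is released.
    if output.domain in HIGH_RISK_DOMAINS:
        return "human_review"
    # Elsewhere, only low-confidence outputs are escalated.
    if output.confidence < threshold:
        return "human_review"
    return "auto_release"


print(route(AIOutput("hiring", "Shortlist candidate 17", 0.95)))   # human_review
print(route(AIOutput("retail", "Your order ships Monday", 0.92)))  # auto_release
```

Routing every high-risk output to a person, regardless of confidence, reflects the point above: in those areas a confident but wrong answer is exactly the failure to guard against.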

4. Fine-Tuning Models

Fine-tuning is the process of further training a pre-trained AI model on specialised data to improve its accuracy for a specific task or domain. This increases the model’s reliability in that domain and reduces hallucinations.

Example: An AI model fine-tuned on legal data can provide better responses to legal questions than a general-purpose pre-trained large language model.
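Much of the practical work in fine-tuning is preparing clean, domain-specific examples. Many fine-tuning services accept training data as JSON Lines (one JSON object per line), but the expected field names vary by provider. The sketch below assumes hypothetical `prompt`/`completion` keys, so check your provider's documentation for the exact schema. It also skips incomplete pairs so they never reach training.

```python
import json

# Sketch of preparing fine-tuning examples as JSON Lines. The "prompt" and
# "completion" field names are an assumption; providers define their own schemas.
def to_jsonl(pairs):
    lines = []
    for question, answer in pairs:
        # Skip incomplete pairs: a missing question or answer is just noise for the model.
        if not question.strip() or not answer.strip():
            continue
        lines.append(json.dumps({"prompt": question.strip(), "completion": answer.strip()}))
    return "\n".join(lines) + "\n" if lines else ""


# Toy legal Q&A pairs; the second one is deliberately incomplete.
legal_pairs = [
    ("What is a tort?", "A civil wrong that causes harm and can lead to legal liability."),
    ("", "Orphan answer with no question"),
]
jsonl = to_jsonl(legal_pairs)
print(jsonl)
```

Because a fine-tuned model learns whatever its examples contain, including mistakes, the answers in such a dataset are worth having reviewed by domain experts first.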

5. Ground Responses in Verifiable Data

AI should be designed to provide responses backed by sources rather than generating speculative or overly generalized statements.

Example: Nowadays, some chatbots built on popular LLMs don’t just generate text responses but also cite the sources and references that back up their answers.
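Systems that cite sources can also check those citations automatically. The sketch below assumes the model was asked to tag each sentence with bracketed document IDs, such as `[refunds]`. It flags sentences that have no citation or that cite an ID not among the documents actually supplied. The citation format, the `check_citations` helper, and the naive sentence splitter (which will mis-split abbreviations like "U.S.") are all assumptions.

```python
import re

# Hypothetical post-generation check on citations in a model's answer.
def check_citations(answer: str, known_sources: set[str]) -> list[str]:
    problems = []
    # Naive sentence split: break after ".", "!" or "?" followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    for sentence in sentences:
        cited = re.findall(r"\[([^\]]+)\]", sentence)
        if not cited:
            problems.append(f"uncited: {sentence}")
        for source in cited:
            # A citation to a document the model was never given is itself a hallucination.
            if source not in known_sources:
                problems.append(f"unknown source [{source}]: {sentence}")
    return problems


answer = ("Refunds are issued within 14 days [refunds]. "
          "Returns are accepted for 90 days. Shipping is free [promo].")
for problem in check_citations(answer, {"refunds", "returns"}):
    print(problem)
```

This confirms only that each claim points to a real source. It does not prove that the source actually supports the claim, which still requires a stronger check or a human reviewer.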

6. Enable User Feedback and Continuous Learning

Allowing users to provide feedback on AI responses helps models refine and improve accuracy over time.

Example: A customer service chatbot can let users report incorrect responses for review and refinement.
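Here is a minimal sketch of the reporting side of such a chatbot. The hypothetical `FeedbackLog` class stores user reports per response ID and queues any response reported at least a set number of times for human review, most-reported first. It keeps everything in memory for simplicity. A real deployment would persist reports in a database, and the threshold is an illustrative assumption.

```python
from collections import defaultdict

# Hypothetical in-memory feedback store for a customer-service chatbot.
class FeedbackLog:
    def __init__(self, review_threshold: int = 3):
        self.review_threshold = review_threshold
        self.reports = defaultdict(list)  # response_id -> list of user comments

    def report_incorrect(self, response_id: str, comment: str = "") -> None:
        self.reports[response_id].append(comment)

    def review_queue(self) -> list[str]:
        # Most-reported responses first, so reviewers see the worst problems early.
        flagged = [rid for rid, c in self.reports.items() if len(c) >= self.review_threshold]
        return sorted(flagged, key=lambda rid: len(self.reports[rid]), reverse=True)


log = FeedbackLog(review_threshold=2)
log.report_incorrect("resp-101", "Quoted a refund window that doesn't exist")
log.report_incorrect("resp-101", "Same wrong refund policy")
log.report_incorrect("resp-202", "Typo in answer")
print(log.review_queue())  # ['resp-101']
```

Corrections confirmed by reviewers can then feed back into the knowledge base, prompts, or training data, which closes the continuous-learning loop described above.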

7. Securing AI

Improving AI security helps reduce AI hallucinations by preventing unauthorized data manipulation, adversarial attacks, and data poisoning. The internet is full of information on AI security, but the OWASP Top 10 Risks and Mitigations for LLMs and GenAI applications is a good starting point.
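As one concrete defence against the data-poisoning scenario described earlier, a training pipeline can check where each document came from before the model ever sees it. The sketch below keeps only documents from an allowlist of trusted domains and drops exact duplicates, since attackers may inject many copies of the same fabricated text. The domain names, document layout, and `filter_training_docs` helper are hypothetical. Real pipelines would add near-duplicate detection, subdomain handling, and anomaly checks on top of this.

```python
import hashlib
from urllib.parse import urlparse

# Hypothetical provenance filter for training documents. The allowlist is illustrative.
TRUSTED_DOMAINS = {"example-news.com", "example-wire.org"}


def filter_training_docs(docs):
    seen_hashes = set()
    kept, rejected = [], []
    for doc in docs:
        domain = (urlparse(doc["url"]).hostname or "").lower()
        # Normalise lightly so trivially altered copies hash the same.
        digest = hashlib.sha256(doc["text"].strip().lower().encode("utf-8")).hexdigest()
        if domain not in TRUSTED_DOMAINS:
            rejected.append((doc["url"], "untrusted source"))
        elif digest in seen_hashes:
            rejected.append((doc["url"], "duplicate"))
        else:
            seen_hashes.add(digest)
            kept.append(doc)
    return kept, rejected


docs = [
    {"url": "https://example-news.com/a", "text": "Council approves new budget."},
    {"url": "https://example-news.com/b", "text": "Council approves new budget. "},
    {"url": "https://unknown-blog.net/x", "text": "Fabricated political story."},
]
kept, rejected = filter_training_docs(docs)
print(len(kept), rejected)
```

An allowlist trades coverage for trust: it shrinks the pool of training data, so the list of trusted sources itself needs regular review.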

Although the risk of AI hallucinations cannot be eliminated entirely, the methods outlined above can greatly reduce it.

Final Thoughts: Keeping AI’s Imagination in Check

AI is a powerful tool, but it has a tendency to “make things up” when it encounters gaps in its knowledge. While some AI hallucinations are harmless, others can have legal, ethical, and financial consequences.

By improving training data, incorporating fact-checking mechanisms, fine-tuning models, and ensuring human oversight, organisations can reduce AI hallucinations and build more trustworthy, responsible AI systems.

As AI continues to evolve, businesses and individuals must stay aware of these risks, because when AI confidently delivers false information, the stakes can be high.