From Prototype to Production: Architecting Enterprise Grade AI Agents Is Hard

AI agents have evolved rapidly, from simple assistants in the lab to autonomous multi-agent systems in production that act on user-provided objectives. They’re now powering research, customer service, and operations across industries. Building a prototype is easy, however; scaling it to production is where most teams struggle.

According to LangChain’s 2024 ‘State of AI Agents’ report, 51% of organisations now run AI agents in production, but performance quality, cost overruns, safety and latency remain their top concerns. As AI agents become more complex, improper design can lead to escalating risks such as hallucinations, errors, soaring costs, increased latency, and ethical challenges.

Creating enterprise-grade AI agents requires more than effective prompt engineering; it demands optimisation across the entire system stack. This 10-minute guide covers architectural patterns and design principles for building agents that are effective, fast, cheap and ethical, making them reliable and trustworthy for enterprise workflows.

Characteristics of Enterprise-Grade AI Agents

Scaling an AI agent from a lab prototype to an enterprise-grade system is far more challenging than it appears. While prototypes can impress in controlled settings, a production environment introduces demanding constraints: cost control, low-latency performance, reliability under diverse conditions, and ethical governance. Without a deliberate approach, teams risk deploying agents that are expensive to run, slow to respond, inconsistent in quality, or misaligned with compliance requirements.

Building agents that can scale and operate reliably in production requires a balanced focus on four interconnected pillars:

Figure: Qualities of Enterprise Grade AI Agents

- Operational Effectiveness: Deliver accurate, contextually relevant outputs with minimal errors and hallucinations, ensuring trust and dependability.

- Execution Speed: Maintain low latency and quick response times so agents can handle real-time or near-real-time workflows without bottlenecks.

- Cost Efficiency: Achieve a low Total Cost of Ownership (TCO) through optimised architecture, resource management, and simplified maintenance.

- Ethical Compliance: Embed fairness, safety, and adherence to regulations into the system’s design, ensuring responsible AI behaviour at scale.

These pillars are not optional add-ons; they form the foundation for AI agents that can scale effectively while remaining reliable, sustainable, and aligned with business and societal expectations.

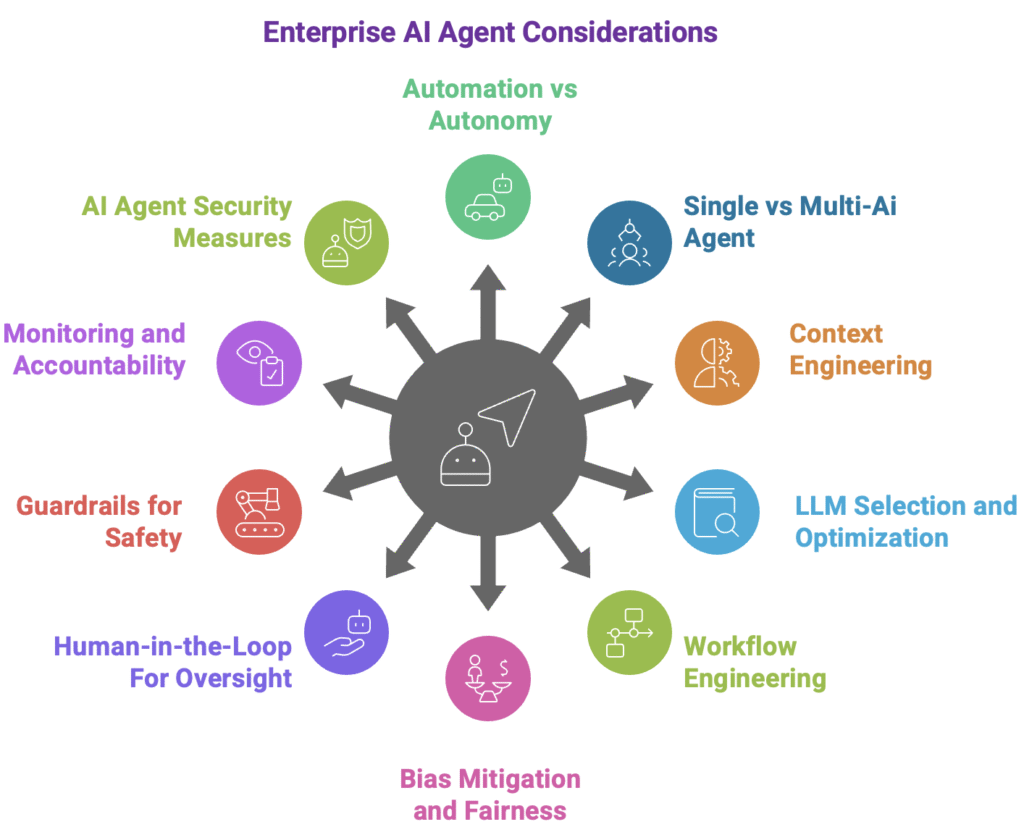

Top 10 Design Considerations for Enterprise Grade AI Agents

Building scalable enterprise grade AI agents is about intentional design across architecture, performance optimisation, cost management, and ethics.

The following ten design considerations distill lessons from leading AI frameworks, multi-agent coordination research, and enterprise deployments. Each principle aligns with one or more of the four pillars, Operational Effectiveness, Execution Speed, Cost Efficiency, and Ethical Compliance, to ensure agents remain accurate, responsive, sustainable, and responsible at scale.

They are not isolated tips but inter-connected design considerations. A single choice, for example LLM selection, can affect multiple aspects like effectiveness, cost, speed and ethical risks. The key is to approach design holistically, balancing trade-offs so that no single pillar is compromised at the expense of another.

Figure: Design Considerations for Enterprise AI Agents

1. Balancing Automation and Autonomy in AI Agent Design

Enterprise-grade AI agents exist on a spectrum between automation (predefined, rule-based actions) and autonomy (adaptive, goal-driven decision-making powered by language models).

Tradeoffs:

Automation and autonomy each have their own strengths:

- Automation Advantages: Automation delivers speed, predictability (and therefore fewer hallucinations), and low-cost execution.

- Autonomy Advantages: Autonomy enables natural language queries, flexibility, and dynamic problem solving in complex and unpredictable scenarios.

The downsides of each should also be considered when designing AI agents:

- Too much automation: Agents fail in dynamic contexts due to lack of adaptability.

- Too much autonomy: Higher latency, cost spikes, increase in bias and potential compliance risks.

The right balance maximizes adaptability while safeguarding performance, efficiency, and safety.

Practical Strategies:

- Use Case Based Routing: Route high-risk, repetitive and predictable tasks to automation-driven modules. Use autonomy for dynamic or ambiguous tasks that require interpretation and carry tolerable risk.

- Set Autonomy Boundaries: Establish decision boundaries (e.g., financial limits, risk levels, compliance triggers) for autonomous agents. If intent recognition indicates that a boundary has been crossed, the agent should hand over to automation or fallback logic.

- Configure Fallback Actions: When the agent cannot decide between automation and autonomy, it should use fallback logic, such as human-in-the-loop review, a safe automated path, or asking the user for more details.

- Feedback Loop: Collect user feedback to identify which use cases suit automation and which suit autonomy.

- Progressive Autonomy: Start with automation and gradually increase the level of autonomy as experience accumulates.
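The routing, boundary and fallback strategies above can be sketched as a small decision function. This is a minimal illustration, not a prescribed design: the `Task` fields, the intent names, the 500.0 limit and the 0.6 confidence floor are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATION = "automation"   # predefined, rule-based path
    AUTONOMY = "autonomy"       # LLM-driven path
    FALLBACK = "fallback"       # human review or a clarifying question


@dataclass
class Task:
    intent: str
    intent_confidence: float  # 0.0-1.0, from a hypothetical intent classifier
    amount: float = 0.0       # monetary value touched by the task, if any
    high_risk: bool = False


# Hypothetical boundaries; real values come from business and compliance owners.
PREDICTABLE_INTENTS = {"reset_password", "check_order_status"}
MAX_AUTONOMOUS_AMOUNT = 500.0
MIN_CONFIDENCE = 0.6


def route(task: Task) -> Route:
    # Unclear intent: do not guess, hand off to the fallback path.
    if task.intent_confidence < MIN_CONFIDENCE:
        return Route.FALLBACK
    # Repetitive, predictable work goes to deterministic automation.
    if task.intent in PREDICTABLE_INTENTS:
        return Route.AUTOMATION
    # Boundary crossed: the autonomous path may not act alone.
    if task.high_risk or task.amount > MAX_AUTONOMOUS_AMOUNT:
        return Route.FALLBACK
    return Route.AUTONOMY
```

Progressive autonomy then amounts to moving intents out of `PREDICTABLE_INTENTS` or raising `MAX_AUTONOMOUS_AMOUNT` as feedback accumulates.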

2. Choosing Between Single-Agent and Multi-Agent Architectures

Selecting between a single-agent and a multi-agent architecture is one of the most critical design decisions when building enterprise-grade AI agents. Each approach offers unique advantages and trade-offs that impact effectiveness, speed, cost, and maintainability.

| Aspect | Single-AI Agent | Multi-AI Agent Systems |
| --- | --- | --- |
| Context Management | Unified; no information is lost between execution steps. | Distributed; risk of fragmentation, but better domain separation. |
| Context Window | Limited by the model; a disadvantage for longer workflows. | Split across agents; extends effective context and suits longer multi-step workflows. |
| Speed | Faster for sequential tasks. | Faster for parallel execution. |
| Cost | Lower token use. | Higher token use. |
| Errors | Fewer hallucinations; more predictable. | Error cascades possible; higher hallucination risk. |
| Maintenance | Easier for simple workflows. | Easier for modular systems. |

Table: Single-AI Agent Systems vs Multi-AI Agent Systems

Bottom Line:

- Choose single-agent systems for simpler, unified-context workflows where speed and cost efficiency matter most.

- Opt for multi-agent architectures when workflows require domain separation, modularity, many sequential steps, or parallel processing at scale.

3. Context Engineering for Reliable AI Agents

In AI agents, context refers to the information available to the model at the time it generates a response. This can include the user’s latest query, prior conversation history, relevant documents, system instructions, and even environmental or operational data. In simple terms, context is everything the AI “knows” at the moment of decision-making, and the quality, relevance, and structure of this context largely determine the accuracy, speed, and cost-efficiency of the agent’s output.

Effective context engineering ensures that the agent gets the right information, in the right format, at the right time, without being overloaded with irrelevant or redundant data. Context falls into two types:

- Static Context: Always-present elements like role definitions, domain rules, compliance constraints, and brand tone.

- Dynamic Context: Changing information such as retrieved documents, conversation history, or real-time operational states.

Separating these ensures stability without bloating the prompt.

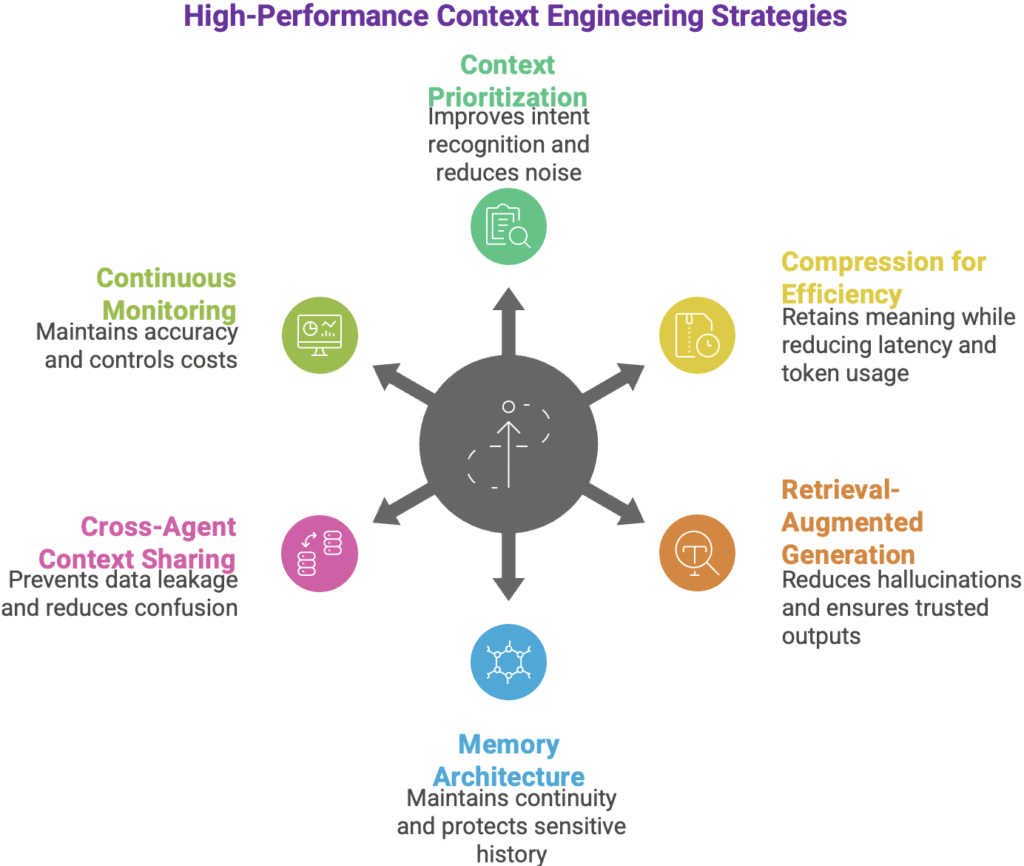

Core Strategies for High-Performance Context Engineering

Figure: Context Engineering for Reliable Agents

i. Context Prioritization & Filtering

Not all retrieved or stored information should be sent to the AI agent in every request. High-performance systems rank and filter context based on semantic relevance (how closely it matches the user’s query), recency (how fresh the data is), and domain scope (whether it belongs to the current topic). This avoids overloading the agent with irrelevant or noisy information. The impacts are:

- Effectiveness: Improves intent recognition.

- Speed: Less to process means faster output.

- Cost: Reduces unnecessary token use.

- Security: Filtering out-of-scope content keeps unrelated sensitive data out of the prompt.
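One way to combine the three signals is to drop out-of-domain chunks and then rank the rest by relevance weighted by a recency decay. The sketch below assumes the relevance score is computed upstream (for example by an embedding search); the `Chunk` type, the 30-day half-life and the 0.2 cut-off are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    relevance: float   # 0-1 semantic similarity to the query, computed upstream
    age_days: float    # how old the underlying data is
    domain: str


def select_context(chunks, domain, top_k=3, half_life_days=30.0, min_score=0.2):
    """Keep in-domain chunks, score by relevance x recency, return the best few."""
    scored = []
    for c in chunks:
        if c.domain != domain:                           # domain scope filter
            continue
        recency = 0.5 ** (c.age_days / half_life_days)   # halves every half-life
        score = c.relevance * recency
        if score >= min_score:                           # drop weak or stale chunks
            scored.append((score, c))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:top_k]]
```

Multiplying the two signals is only one option; a weighted sum, or a hard recency cut-off, may fit better when freshness matters less than relevance.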

ii. Compression for Efficiency

When valuable context exceeds the model’s input limit, compression techniques ensure essential meaning is retained. This includes summarizing long passages or historical conversations, extracting key facts and entities, and clustering similar information into compact forms. The impacts are:

- Effectiveness: Retains meaning in tight context windows.

- Speed: Smaller inputs process faster.

- Cost: Lower token usage.

iii. Retrieval-Augmented Generation (RAG)

Ground the agent’s responses in enterprise knowledge by retrieving the most relevant documents from internal databases or vector stores before each request.

- Effectiveness: Reduces hallucinations by anchoring answers in verified sources.

- Speed: Targeted retrieval avoids full-corpus searches.

- Cost: Optimized retrieval cuts redundant LLM calls.

- Ethics/Security: Ensures outputs align with approved information sources.
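The retrieve-then-ground flow can be shown with a toy example. The bag-of-words `embed` function below is a stand-in so the sketch runs without dependencies; a real deployment would use an embedding model and a vector store, and the prompt wording is only an assumption.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real system would use an embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, top_k=2):
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query, documents):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = retrieve(query, documents)
    numbered = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(sources, start=1))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n"
        f"Sources:\n{numbered}\n"
        f"Question: {query}"
    )
```

Numbering the sources lets the model cite them, which also helps the explainability practices discussed later.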

iv. Memory Architecture

Design layered memory: short-term (session), long-term (vector store), and hybrid recall across both. Fetch only relevant, authorized entries.

- Effectiveness: Maintains conversation or workflow continuity without reintroducing irrelevant past data.

- Cost: Optimized retrieval avoids unnecessary LLM calls.

- Security: Limits exposure of sensitive history.

v. Cross-Agent Context Sharing

In multi-agent systems, use protocols like A2A (Agent2Agent) or MCP (Model Context Protocol) to share minimal, relevant slices of context while enforcing domain separation.

- Effectiveness: Reduces confusion.

- Security: Prevents data leakage.

vi. Continuous Monitoring & Optimization

Track retrieval accuracy, prompt length, token spend, and context quality; refine regularly. Also watch for agent drift/shift, where outputs deviate from intended behavior.

- Effectiveness: Sustains accuracy over time.

- Cost: Keeps operations efficient.

- Ethics/Security: Detects bias and sensitive data leaks early.

Bottom Line:

Context is the lifeblood of AI agents. By combining prioritization, compression, RAG grounding, smart memory, and controlled sharing, enterprises can achieve high accuracy, low latency, predictable costs, and strong safety/compliance in production-grade AI agents.

4. LLM Selection, Distillation, and Quantization Strategies

The LLM is the brain of an AI agent, powering its ability to understand language, reason over complex scenarios, and generate contextually relevant responses. Designing enterprise-grade AI agents starts with choosing the right Large Language Model (LLM) for the job. The key strategies for creating effective, fast and cheap AI agents are:

i. LLM Selection

Large models (e.g., GPT-4) suit complex, ambiguous tasks that need sophisticated reasoning. Smaller or domain-tuned models are faster and cheaper, which makes them ideal for predictable workloads. The impacts of this approach are:

- Effectiveness – Right model for the right job improves accuracy.

- Speed – Smaller models reduce response time.

- Cost – Lowers operational expenses.

ii. Model Distillation

Distillation trains a smaller “student” model to mimic a larger “teacher” model’s behavior. This preserves task-critical accuracy while reducing compute overhead. The impacts are:

- Effectiveness – Keeps quality where it matters.

- Speed – Smaller model = faster inference.

- Cost – Reduced hardware and cloud compute usage.

iii. Low-Rank Adaptation (LoRA) Fine-Tuning

LoRA is a low-cost fine-tuning method that adds small trainable matrices to a large model without changing its core weights, thus avoiding full retraining. Its impacts on scalability are:

- Effectiveness – Improves domain relevance.

- Cost – Avoids full fine-tune costs.
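To see where the savings come from: for a frozen weight matrix of shape d x k, LoRA trains two low-rank factors, B (d x r) and A (r x k), so the trainable parameter count is r(d + k) instead of d·k. The sizes below are illustrative and not taken from any specific model.

```python
def lora_trainable_params(d, k, r):
    """Parameters in the two low-rank factors B (d x r) and A (r x k)."""
    return r * (d + k)


def full_finetune_params(d, k):
    """Parameters updated when the whole d x k weight matrix is trained."""
    return d * k


d, k, r = 4096, 4096, 8          # illustrative sizes, not from a specific model
full = full_finetune_params(d, k)
lora = lora_trainable_params(d, k, r)
ratio = lora / full
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {ratio:.4%}")
```

With these numbers the adapter trains 65,536 parameters against 16,777,216 for the full matrix, roughly 0.39% of the original.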

iv. Quantization

Quantization reduces a model’s numerical precision to lower memory use and speed up inference. It may cause a small loss in quality but offers the following benefits:

- Speed – Faster response for real-time tasks.

- Cost – Runs on cheaper hardware.
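A worked example makes the precision trade-off concrete. The sketch below uses symmetric per-tensor int8 quantization, which is one common scheme among several; the weight values are made up for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0   # one float shared by the tensor
    q = [round(w / scale) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map the stored integers back to approximate float values."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.01, 1.0]               # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight is stored as a small integer plus one shared scale, and the rounding error per weight is bounded by half the scale. That bound is the source of the small quality loss mentioned above.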

5. Workflow Engineering for Effective Task Completion

In enterprise-grade AI agents, workflow engineering determines whether an agent can reliably complete complex tasks end-to-end (both LLM processing and task execution outside the LLM). It’s not enough for an agent to respond accurately; it must follow a clear execution strategy, integrate with the right tools, and adapt to evolving situations without losing context or control.

Figure: Workflow Engineering for AI Agents

i. Choosing the Right Reasoning and Planning Strategy

Two key strategies are used in AI agents for reasoning and planning:

- ReAct (Reason + Act): Combines thinking and action in iterative loops; ideal for exploratory or adaptive workflows.

- Plan-Act/Reflect: Separates the planning, execution, and reflection stages; suited to complex, high-stakes processes requiring error correction.

ReAct offers tactical agility, making it ideal for unpredictable, real-time environments. Plan-Act/Reflect provides strategic robustness, making it the better choice for complex tasks where a coherent, long-term plan is paramount.
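The iterative loop behind ReAct can be sketched in a few lines. The `model` callable and its dict-shaped return value are assumptions made for this example, and `scripted_model` is a stand-in for an LLM so the code runs without any API.

```python
def react_loop(question, model, tools, max_steps=5):
    """Alternate model decisions with tool calls until a final answer appears."""
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(transcript)             # hypothetical: returns a dict
        if step["type"] == "final":
            return step["content"], transcript
        tool = tools.get(step["tool"])
        if tool is None:
            observation = f"unknown tool {step['tool']!r}"
        else:
            observation = tool(step["input"])
        # Feed the action and its result back so the next step can use them.
        transcript.append(f"Action: {step['tool']}({step['input']})")
        transcript.append(f"Observation: {observation}")
    return None, transcript                  # step budget exhausted


def scripted_model(transcript):
    """Scripted stand-in for an LLM: look up stock once, then answer."""
    if not any(line.startswith("Observation:") for line in transcript):
        return {"type": "action", "tool": "lookup_stock", "input": "SKU-1"}
    return {"type": "final", "content": "SKU-1 has 7 units in stock."}


tools = {"lookup_stock": lambda sku: {"SKU-1": 7}.get(sku, 0)}
answer, trace = react_loop("How many SKU-1 are in stock?", scripted_model, tools)
```

The `max_steps` budget caps latency and cost when the model fails to converge. A plan-first variant would instead ask the model for the full list of steps up front and reflect after execution.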

ii. Tool Integrations

Connect seamlessly with APIs, CRMs, ERPs, knowledge bases, and databases, and include retries, error handling, and fallback logic. In multi-agent settings, tools that belong to the same domain and serve similar requests should be handled by the same agent, which makes intent recognition more effective.

iii. Task Decomposition and Sequencing

Break complex objectives into smaller subtasks; use parallel execution where possible and conditional branching to skip unnecessary steps.

iv. Structured Output

Enforce machine-readable formats (e.g., JSON) to ensure accurate integration with downstream systems.
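Enforcement means validating the model's output before anything downstream consumes it. The sketch below checks a hypothetical three-field schema with the standard `json` module; the field names are assumptions for illustration.

```python
import json

# Hypothetical schema for a refund action.
REQUIRED_FIELDS = {"action": str, "order_id": str, "amount": float}


def parse_agent_output(raw):
    """Parse model output as JSON and check required fields and types.

    Returns (data, None) on success or (None, error_message) on failure, so
    the caller can re-prompt or escalate instead of passing bad data on.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg}"
    if not isinstance(data, dict):
        return None, "expected a JSON object"
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            return None, f"missing field: {field}"
        value = data[field]
        # Accept an integer such as 10 where a float such as 10.0 is expected.
        if expected is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
            data[field] = value
        if not isinstance(value, expected):
            return None, f"wrong type for {field}"
    return data, None
```

Returning the error message, rather than raising, makes it easy to feed the message back to the model in a corrective re-prompt.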

v. Execution Monitoring

Track task status, latency, and costs in real time, adjusting dynamically as needed.

Effective workflow engineering improves intent-to-action accuracy, reduces errors, and boosts task completion, while parallelism and streamlined steps speed up execution and cut costs. Controlled tool access and clear decision logic ensure compliance, security, and safe operations.

6. Ensuring Unbiased Training and Fair Algorithms

Bias in AI agents can originate from training data, model architecture, or deployment processes, leading to inaccurate, discriminatory, or unethical outcomes. For enterprise-grade AI agents, ensuring fairness is both a technical and compliance imperative, especially in regulated industries.

The following strategies can help avoid bias and make AI agents ethical:

i. Data Auditing

Training datasets should be evaluated for imbalances, missing representation, or skewed distributions. Techniques such as stratified sampling, synthetic data augmentation, and adversarial debiasing can help correct these imbalances.

ii. Algorithmic Fairness Checks

Fairness validation should be built into the model lifecycle. The following actions help ensure algorithms are fair:

- Test outputs across demographic groups.

- Use counterfactual fairness tests (same input, different demographic variables).

- Apply fairness constraints during model training.
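A counterfactual test holds every input fixed, changes only the demographic attribute, and checks whether the decision changes. The two scoring rules below are toy stand-ins for a real model, and the attribute names and threshold are hypothetical.

```python
def counterfactual_flips(model, record, attribute, values):
    """Return the attribute values for which the decision differs from the original."""
    baseline = model(record)
    flips = []
    for value in values:
        if value == record[attribute]:
            continue
        variant = {**record, attribute: value}   # same input, one attribute changed
        if model(variant) != baseline:
            flips.append(value)
    return flips


def fair_model(r):
    """Toy rule that ignores the protected attribute."""
    return r["income"] >= 40000


def biased_model(r):
    """Toy rule that wrongly depends on the protected attribute."""
    return r["income"] >= 40000 and r["gender"] != "female"


applicant = {"income": 50000, "gender": "male"}
genders = ["male", "female", "nonbinary"]
```

An empty result is necessary but not sufficient for fairness: proxies such as postcode can still encode the protected attribute, which is why group-level output testing remains part of the list above.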

iii. Continuous Monitoring

Biases can emerge over time during the model lifecycle due to changes in data, user behavior, or domain context.

- Deploy bias detection tools and processes in the AIOps pipeline.

- Set alerts in observability dashboards to flag fairness deviations early.

iv. Diverse Teams

Involve multidisciplinary, demographically diverse teams in data curation, model development, and review to catch blind spots early and design for inclusivity.

How this makes AI agents enterprise grade:

- Effectiveness – Reduces error rates and improves trust in agent responses.

- Ethics – Supports compliance with fairness guidelines and regulations such as GDPR, US EEOC guidance, and the EU AI Act.

- Cost – Prevents expensive rework, legal liabilities, and reputational damage.

By embedding fairness into data pipelines and model evaluation, organisations ensure their AI agents operate responsibly and inclusively at scale.

7. Integrating Human-in-the-Loop for Oversight and Control

Human-in-the-Loop (HITL) ensures enterprise AI agents remain accurate, safe, and aligned with operational and ethical goals. While automation drives efficiency, human oversight is critical for ambiguous, high-stakes, or compliance-sensitive tasks. The following HITL techniques can make a significant difference for an enterprise AI agent:

- Align goals — Humans and agents pursue the same outcome within the same workflow.

- Define human roles — What humans can do: approve, give feedback, clarify, override.

- Define intervention points — Trigger review on low‑confidence intent, complex decisions, or edge cases beyond training.

- Confidence thresholds — Auto‑route outputs below a set certainty score to human validation.

- Tiered oversight — Agent handles low‑risk routine tasks; escalate by risk/uncertainty to avoid reviewer burnout.

- Provide full context — Seamless handoff with full context: user input, retrieved data, reasoning trace, attempted tool calls, errors, and proposed action.

- Real‑time review — Reach humans where they work: email, dashboards, chat, notifications; set clear SLAs.

- Feedback loops (dual role) — Fix the immediate case and log corrections to improve datasets/prompts/fine‑tunes.

- Access control & privacy — RBAC, need‑to‑know views, redaction for sensitive fields.

- Measure & improve — Track HITL rate, turnaround time, acceptance vs. edits, post‑review error rate.
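Confidence thresholds and tiered oversight from the list above can be combined into one routing rule, with the HITL rate as a simple metric on top. The tier names, the 0.8 threshold and the way confidence is obtained are assumptions for this sketch.

```python
def review_decision(confidence, risk, threshold=0.8):
    """Decide who handles an agent output.

    confidence: model certainty score in [0, 1] (how it is computed is assumed)
    risk: "low", "medium" or "high", assigned by business rules
    """
    if risk == "high":
        return "senior_reviewer"    # always reviewed, regardless of confidence
    if confidence < threshold:
        return "reviewer"           # below the certainty score: human validation
    if risk == "medium":
        return "spot_check"         # released, then sampled for later review
    return "auto"                   # low-risk routine task, agent proceeds


def hitl_rate(decisions):
    """Share of outputs that needed a human before release."""
    if not decisions:
        return 0.0
    gated = sum(d in ("reviewer", "senior_reviewer") for d in decisions)
    return gated / len(decisions)
```

Tracking `hitl_rate` over time shows whether the threshold is too strict, which burns reviewer time, or too loose, which raises the post-review error rate.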

Impact on enterprise readiness of AI agents:

- Ethics/Security – Ensures oversight for sensitive, regulated, or high-risk cases.

- Effectiveness – Reduces errors and improves intent recognition.

- Speed – Selective intervention avoids unnecessary slowdowns.

- Cost – Minimises costly mistakes while focusing human effort where it matters.

8. Implementing Guardrails for Safe and Aligned Behaviour

AI guardrails are technical, procedural, and ethical safeguards that ensure AI agents operate safely, responsibly, and in alignment with organizational and legal requirements. In enterprise-grade agents, guardrails are not optional—they are the foundation for trustworthy, compliant, and secure operations.

Key Types and Strategies:

- Input Validation & Content Filtering – Clean and validate incoming prompts or data to block malicious inputs, syntax errors, inappropriate content, and prompt injection attacks before reaching the model.

- Output Monitoring & Filtering – Screen responses for toxic language, hallucinations, sensitive information (e.g., PII), or regulatory breaches before release.

- Bias & Hallucination Detection – Identify and correct biased or fabricated outputs, ensuring fairness and factual accuracy. These guardrails check that responses are grounded in enterprise data (e.g., through RAG).

- Privacy & Data Protection – Enforce privacy policies by filtering or masking sensitive data and adhering to GDPR, HIPAA, and industry-specific standards.

- Security & Misuse Prevention – Limit capabilities to approved functions, detect unauthorized use, and apply rate-limiting to control cost and prevent abuse.

- Human-in-the-Loop & Overrides – Route high-risk or ambiguous cases to human review with clear intervention options.

- Procedural Guardrails – Apply governance processes, audits, and compliance checks alongside technical controls.

Impact:

- Effectiveness – Narrows decision scope to reduce hallucinations and harmful outputs.

- Speed – Real-time safeguards with minimal latency overhead.

- Cost – Prevents expensive remediation from unsafe actions.

- Ethics/Security – Maintains fairness, compliance, and trust.

In practice, guardrails should be layered across input, reasoning, and output stages, protecting the entire AI lifecycle.
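A minimal sketch of the input and output layers is shown below. The regular expressions are deliberately simple and illustrative; production filters usually rely on dedicated classifiers and much broader pattern coverage, and the blocked-request message is an assumption.

```python
import re

# Deliberately simple patterns for illustration only.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the )?system prompt", re.IGNORECASE),
]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")   # 13 to 16 digits, optional separators


def check_input(prompt):
    """Input guardrail: False if the prompt matches a known injection phrase."""
    return not any(p.search(prompt) for p in INJECTION_PATTERNS)


def redact_output(text):
    """Output guardrail: mask email addresses and card-like digit runs."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


def guarded_call(prompt, model):
    """Wrap a model call with both guardrail layers."""
    if not check_input(prompt):
        return "Request blocked by input policy."
    return redact_output(model(prompt))
```

Here `model` is any callable that takes a prompt and returns text, so the same wrapper can sit in front of different providers.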

9. Observability, Explainability and Accountability in AI Agents

In enterprise-grade AI agents, observability, explainability, and accountability form the backbone of operational trust and long-term reliability. They ensure that every decision, action, and outcome is transparent, traceable, and improvable, enabling operational reliability, compliance, and user trust.

Key strategies:

- Logging and traces: Capture standardised logs and traces for every step, from the user prompt to the final output, including layered prompting, tool calls, retrieval results, and decision branches.

- Complete Workflow Visibility: Record each action in sequence to enable full visibility into agent behaviour and make root cause analysis faster and more accurate.

- Layered Prompting Transparency: Break down reasoning into clear stages; log intermediate outputs so operators understand the contribution of each stage.

- Chain-of-Thought Tracing: Expose reasoning traces in a controlled and safe format, allowing humans to understand why a decision was made without revealing sensitive or exploitable prompts.

- Interactive Explanations: Provide users and operators the ability to drill into responses, showing which data sources, tools, or retrieved documents influenced the result.

- Accountability Records: Document who (or what) made each decision, the time, the applied policies, and any human overrides for compliance and governance.

- Metrics-Driven Monitoring: Track key performance indicators (KPIs) such as intent recognition accuracy, latency, token usage, task completion rate, error rate, hallucination frequency, and user satisfaction scores to measure performance and trustworthiness over time.

- Continuous Feedback Loop: Feed observability insights into retraining, fine-tuning, or prompt refinement to reduce drift, improve accuracy, and enhance system reliability over time.
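Standardised logs are easiest to analyse when every step emits one structured record under a shared trace id. The class below is a minimal sketch using only the standard library; the field names (`tokens`, `latency_ms`, `decided_by`) are illustrative rather than a fixed schema.

```python
import json
import time
import uuid


class Trace:
    """Collects one structured record per agent step under a shared trace id."""

    def __init__(self):
        self.trace_id = uuid.uuid4().hex
        self.events = []

    def log(self, step, **fields):
        """Append an event; seq preserves the order of actions for root cause analysis."""
        self.events.append({
            "trace_id": self.trace_id,
            "seq": len(self.events),
            "ts": time.time(),
            "step": step,
            **fields,
        })

    def to_jsonl(self):
        """Serialise as JSON Lines, a format most log pipelines can ingest."""
        return "\n".join(json.dumps(e) for e in self.events)

    def total(self, field):
        """Sum a numeric field (e.g. tokens) across all events that carry it."""
        return sum(e.get(field, 0) for e in self.events)


trace = Trace()
trace.log("prompt", tokens=120)
trace.log("tool_call", tool="crm", latency_ms=40)
trace.log("final", tokens=80, decided_by="agent")
```

The `decided_by` field doubles as an accountability record: a human override would log the reviewer's identity in the same place.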

Impact on AI Agents:

- Effectiveness: Improves debugging, reduces errors, and sustains accuracy.

- Speed: Faster resolution of failures or anomalies.

- Cost: Prevents repeated inefficiencies by identifying and fixing issues early.

- Ethics/Security: Ensures compliance, fairness, and trust through traceable decision-making.

10. Securing AI Agents Against Adversaries

Enterprise-grade AI agents operate in environments where malicious actors can exploit vulnerabilities in prompts, APIs, integrations, and data flows. Securing these agents is not optional but critical to protecting sensitive information, maintaining trust, and ensuring operational continuity.

Key Threat Vectors & Strategies:

Figure: Securing AI Agents

- Prompt Injection & Indirect Injection Attacks: Attackers embed malicious instructions in inputs or external content (e.g., retrieved documents) to manipulate the model.

Mitigation: Apply input sanitization, strict parsing, zero-trust architecture and allowlist-based tool invocation.

- Data Leakage & Context Poisoning: Sensitive information from prior sessions or external sources can unintentionally appear in outputs.

Mitigation: Implement context isolation between sessions, redact PII, and enforce domain separation in multi-agent systems.

- Tool & API Misuse: If an agent has connected tools (databases, APIs, actuators), an attacker may trick it into unauthorized actions.

Mitigation: Enforce role-based access control (RBAC), scope-limited API keys, zero-trust architecture and human approval for high-impact operations.

- Supply Chain Attacks (Plugins/Integrations): Malicious or compromised third-party components can be entry points.

Mitigation: Establish data provenance, conduct vulnerability analysis, vet and sandbox plugins, implement zero-trust architecture and behaviour monitoring for anomalous calls.

- Model Theft & Adversarial Queries: Attackers can extract model weights or prompt logic through repeated queries.

Mitigation: Apply rate-limiting, output watermarking, and anomaly detection.

- Monitoring & Threat Intelligence: Continuously log agent actions, detect abnormal patterns (e.g., unexpected tool usage), and integrate with SIEM/SOC workflows.
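Allowlist-based tool invocation, RBAC and human approval for high-impact operations can be enforced at a single choke point. The sketch below is a minimal illustration; the policy table, role names and tool names are hypothetical.

```python
# Hypothetical policy: which roles may call which tools, and which need approval.
TOOL_POLICY = {
    "read_order": {"roles": {"support", "finance"}, "needs_approval": False},
    "issue_refund": {"roles": {"finance"}, "needs_approval": True},
}


class ToolDenied(Exception):
    """Raised when a tool call violates the policy."""


def invoke_tool(name, role, tools, args, approved=False):
    """Run a tool only if it is allowlisted, the role is permitted, and approval is present."""
    policy = TOOL_POLICY.get(name)
    if policy is None or name not in tools:
        raise ToolDenied(f"{name} is not an allowlisted tool")
    if role not in policy["roles"]:
        raise ToolDenied(f"role {role} may not call {name}")
    if policy["needs_approval"] and not approved:
        raise ToolDenied(f"{name} requires human approval")
    return tools[name](**args)
```

Because the check sits outside the model, an injected instruction cannot grant itself access: a tool missing from `TOOL_POLICY` is refused even if the agent has a handle to it.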

Impact on AI Agents:

- Effectiveness: Agents remain accurate and unmanipulated by malicious inputs.

- Speed: Real-time security checks prevent operational downtime.

- Cost: Avoids costly incident response and data breach remediation.

- Ethics/Security: Protects user trust, privacy, and compliance with regulations like GDPR or HIPAA.

In practice, securing AI agents requires a layered defense — combining preventive controls, runtime monitoring, and rapid incident response to address evolving adversarial threats.

Conclusion

Enterprise AI agents need to be effective, accurate, fast, cost-efficient, secure and ethical. Achieving this requires a balanced, system-wide approach that combines the right architecture, optimised context management, model efficiency techniques, reliable workflows, fairness safeguards, and continuous security.

The goal is sustainable scalability, where speed doesn’t reduce accuracy, cost savings don’t compromise safety, and automation is supported by human oversight for high-stakes decisions. Done right, these agents are production-ready, reliable, and enhance value for enterprises.